This script min-max normalizes the variables of a numeric dataset and computes the pairwise correlations between them. The data can be analyzed as a whole or split into n consecutive subsets. When split, each subset is normalized separately and correlations are computed within each subset.
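As a rough illustration of per-subset normalization, the sketch below min-max scales a single column within each of n consecutive subsets. It assumes min-max scaling, which is what the script further down uses, and relies on `np.array_split` for the split. The helper name `normalize_in_subsets`, the zero fallback for constant subsets, and the sample values are hypothetical and not part of the script.

```python
import numpy as np

def normalize_in_subsets(column, n):
    """Min-max scale `column` separately within n consecutive subsets.

    Hypothetical helper for illustration only.
    """
    parts = np.array_split(np.asarray(column, dtype=float), n)
    scaled = []
    for part in parts:
        span = part.max() - part.min()
        # Assumption: a constant subset maps to zeros instead of dividing by zero.
        scaled.append((part - part.min()) / span if span > 0 else np.zeros_like(part))
    return np.concatenate(scaled)

# Made-up sample values: two subsets of three samples each.
values = [1.0, 2.0, 3.0, 10.0, 20.0, 40.0]
print(normalize_in_subsets(values, 2))
```

Each subset is scaled to the range 0 to 1 using its own minimum and maximum, so values from different subsets are not directly comparable after scaling.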

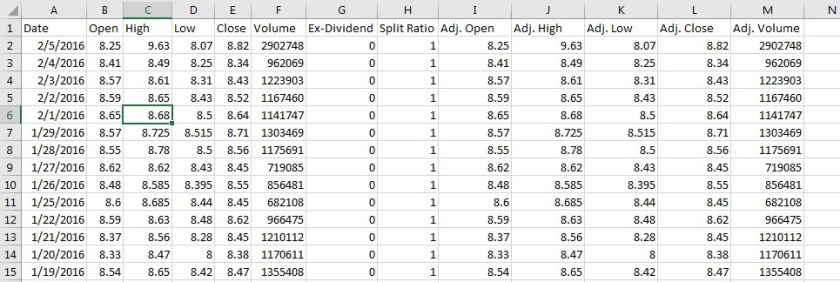

Input is read from a .csv file with any number of columns (as shown below). Each column must have the same number of samples. The script assumes that the first row contains the column headers.
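To make the expected input layout concrete, here is a minimal sketch that reads a small in-memory CSV with `np.genfromtxt`, using the same arguments as the script. The column names and values are made up for illustration, and `io.StringIO` stands in for a real file path.

```python
import io
import numpy as np

# Made-up CSV content: a header row followed by numeric samples.
csv_text = "time,temp,pressure\n0,20.5,101.3\n1,21.0,101.1\n2,21.4,100.9\n"

# The first row only, read as strings, gives the column names.
headers = np.genfromtxt(io.StringIO(csv_text), delimiter=",", dtype=str, max_rows=1)

# The remaining rows, read as floats, give the samples (one row per sample).
data = np.genfromtxt(io.StringIO(csv_text), delimiter=",", skip_header=1)

print(headers)
print(data.shape)  # (number of samples, number of variables)
```

If a column is shorter than the others, `np.genfromtxt` either raises an error or fills the missing cells with `nan`, depending on how the gaps appear in the file, which is why every column needs the same number of samples.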

import numpy as np

# Divides a list (or np.array) into `size` parts of nearly equal length.
# The first `len(input) % size` parts receive one extra element.
# http://stackoverflow.com/questions/4119070/how-to-divide-a-list-into-n-equal-parts-python
def slice_list(input, size):
    input_size = len(input)
    slice_size = input_size // size
    remain = input_size % size
    result = []
    iterator = iter(input)
    for i in range(size):
        result.append([])
        for j in range(slice_size):
            result[i].append(next(iterator))
        if remain:
            result[i].append(next(iterator))
            remain -= 1
    return result

# Functions below are from Data Science From Scratch by Joel Grus
def mean(x):
    return sum(x) / len(x)

def de_mean(x):
    x_bar = mean(x)
    return [x_i - x_bar for x_i in x]

def dot(v, w):
    return sum(v_i * w_i for v_i, w_i in zip(v, w))

def sum_of_squares(v):
    return dot(v, v)

def variance(x):
    # Sample variance (divides by n - 1), so x needs at least two elements.
    n = len(x)
    deviations = de_mean(x)
    return sum_of_squares(deviations) / (n - 1)

def standard_deviation(x):
    return np.sqrt(variance(x))

def covariance(x, y):
    n = len(x)
    return dot(de_mean(x), de_mean(y)) / (n - 1)

def correlation(x, y):
    stdev_x = standard_deviation(x)
    stdev_y = standard_deviation(y)
    if stdev_x > 0 and stdev_y > 0:
        return covariance(x, y) / stdev_x / stdev_y
    else:
        # No variation in x or y: report the correlation as 0.
        return 0

# Read data from CSV (numeric samples first, then the header row as strings)
input_path = r'C:\Users\Craig\Documents\GitHub\normalized\VariableTimeIntervalInput.csv'
input_data = np.genfromtxt(input_path, delimiter=",", skip_header=1)
var_headers = np.genfromtxt(input_path, delimiter=",", dtype=str, max_rows=1)

# Determine number of samples & variables
number_of_samples, number_of_allvars = input_data.shape

# Define number of samples (and start/end points) in full time interval
full_sample = number_of_samples
full_sample_start = 0
full_sample_end = number_of_samples

# Define number of intervals to split data into
n = 2

dvar_sublists = {}
max_sublists = np.zeros((number_of_allvars, n))
min_sublists = np.zeros((number_of_allvars, n))
# One extra column holds the subset index of each row.
subnorm_test = np.zeros((full_sample_end, number_of_allvars + 1))

# Slice variable lists and record the max and min of each sublist
for dvar in range(0, number_of_allvars):
    dvar_sublists[dvar] = slice_list(input_data[:, dvar], n)
    for sublist in range(0, n):
        max_sublists[dvar, sublist] = np.max(dvar_sublists[dvar][sublist])
        min_sublists[dvar, sublist] = np.min(dvar_sublists[dvar][sublist])

var_interval_sublists = max_sublists - min_sublists

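# Normalize each sublist (min-max scaling within its own subset).
# Note: a sublist whose values are all equal has a zero interval and yields nan.
for var in range(0, number_of_allvars):
    x_count = 0
    for n_i in range(0, n):
        sublength = len(dvar_sublists[var][n_i])
        for x in range(0, sublength):
            subnorm_test[x_count, var] = (dvar_sublists[var][n_i][x] - min_sublists[var, n_i]) / var_interval_sublists[var, n_i]
            # The last column records which subset the row belongs to
            # (previously hard-coded as column 6, which only works for six variables).
            subnorm_test[x_count, number_of_allvars] = n_i
            x_count += 1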

var_sub_correlation = np.zeros((n, number_of_allvars, number_of_allvars), float)

# Check for correlation between each pair of variables within each subset.
# The rows of subset n_i start right after the rows of all preceding subsets;
# every variable has the same subset lengths, so variable 0 is used to measure them.
start = 0
for n_i in range(0, n):
    end = start + len(dvar_sublists[0][n_i])
    for i in range(0, number_of_allvars):
        for j in range(0, number_of_allvars):
            var_sub_correlation[n_i, i, j] = correlation(subnorm_test[start:end, i], subnorm_test[start:end, j])
    start = end

#Writes to CSV

np.savetxt(r'C:\Users\Craig\Documents\GitHub\normalized\sublists_normalized.csv',subnorm_test, delimiter=",")

print(var_sub_correlation, 'variable correlation matrix')
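One possible cross-check, which is not part of the original script, is to compute the per-subset correlation matrices with NumPy's `np.corrcoef`. The sketch below assumes the data is an array with one row per sample and splits it with `np.array_split`, which hands out leftover rows to the first subsets in the same way as `slice_list`. Because Pearson correlation is unchanged by min-max scaling, the raw values can be used directly. The function name `subset_correlations` and the sample data are hypothetical.

```python
import numpy as np

def subset_correlations(data, n):
    """Return an (n, n_vars, n_vars) array of per-subset correlation matrices.

    Hypothetical helper for illustration only.
    """
    data = np.asarray(data, dtype=float)
    parts = np.array_split(data, n, axis=0)
    # rowvar=False: columns are variables, rows are samples.
    return np.stack([np.corrcoef(part, rowvar=False) for part in parts])

# Made-up data: 6 samples of 3 variables.
data = np.array([
    [1.0, 2.0, 9.0],
    [2.0, 4.0, 7.0],
    [3.0, 6.0, 8.0],
    [4.0, 1.0, 3.0],
    [5.0, 3.0, 2.0],
    [6.0, 2.0, 1.0],
])
corr = subset_correlations(data, 2)
print(corr.shape)
```

Unlike the `correlation` function above, which returns 0 when a variable has no variation, `np.corrcoef` yields `nan` for a constant column, so the two results can differ in that case.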